vignettes/dbplyr.RmdAs well as working with local in-memory data stored in data frames, dplyr also works with remote on-disk data stored in databases. This is particularly useful in two scenarios:

Figure 3: dplyr leftjoin Function. The difference to the innerjoin function is that leftjoin retains all rows of the data table, which is inserted first into the function (i.e. Have a look at the R documentation for a precise definition: Example 3: rightjoin dplyr R Function. Right join is the reversed brother of left join.

- Dplyr fulljoin In a full join, R data frame objects are merged together with the dplyr function fulljoin. Corresponding rows with a matching column value in each data frame are combined into one row of a new data frame, and non-matching rows are also added to the resultant data frame with NA s for the missing information. Dplyr ‘s fulljoin function will perform a full join, where non-matching rows are.

- Currently dplyr supports four types of mutating joins, two types of filtering joins, and a nesting join. Mutating joins combine variables from the two data.frames: innerjoin return all rows from x where there are matching values in y, and all columns from x and y. If there are multiple matches between x and y, all combination of the matches are returned.

- Remember: Please Join our RBootcamp OHSU Group! We've been looking at datasets that fit the ggplot2 paradigm nicely; however, most data we encounter is really messy (missing values), or is a completely different format. In this chapter, we'll look at one of the most powerful tools in the tidyverse: dplyr, which lets you manipulate data frames.There is a function/action for most of the.

Your data is already in a database.

You have so much data that it does not all fit into memory simultaneously and you need to use some external storage engine.

(If your data fits in memory there is no advantage to putting it in a database: it will only be slower and more frustrating.)

This vignette focuses on the first scenario because it’s the most common. If you’re using R to do data analysis inside a company, most of the data you need probably already lives in a database (it’s just a matter of figuring out which one!). However, you will learn how to load data in to a local database in order to demonstrate dplyr’s database tools. At the end, I’ll also give you a few pointers if you do need to set up your own database.

Anti Join Dplyr

Getting started

To use databases with dplyr you need to first install dbplyr:

You’ll also need to install a DBI backend package. The DBI package provides a common interface that allows dplyr to work with many different databases using the same code. DBI is automatically installed with dbplyr, but you need to install a specific backend for the database that you want to connect to.

Five commonly used backends are:

RMariaDB connects to MySQL and MariaDB

RPostgres connects to Postgres and Redshift.

RSQLite embeds a SQLite database.

odbc connects to many commercial databases via the open database connectivity protocol.

bigrquery connects to Google’s BigQuery.

If the database you need to connect to is not listed here, you’ll need to do some investigation (i.e. googling) yourself.

In this vignette, we’re going to use the RSQLite backend which is automatically installed when you install dbplyr. SQLite is a great way to get started with databases because it’s completely embedded inside an R package. Unlike most other systems, you don’t need to setup a separate database server. SQLite is great for demos, but is surprisingly powerful, and with a little practice you can use it to easily work with many gigabytes of data.

Connecting to the database

To work with a database in dplyr, you must first connect to it, using DBI::dbConnect(). We’re not going to go into the details of the DBI package here, but it’s the foundation upon which dbplyr is built. You’ll need to learn more about if you need to do things to the database that are beyond the scope of dplyr.

The arguments to DBI::dbConnect() vary from database to database, but the first argument is always the database backend. It’s RSQLite::SQLite() for RSQLite, RMariaDB::MariaDB() for RMariaDB, RPostgres::Postgres() for RPostgres, odbc::odbc() for odbc, and bigrquery::bigquery() for BigQuery. SQLite only needs one other argument: the path to the database. Here we use the special string ':memory:' which causes SQLite to make a temporary in-memory database.

Most existing databases don’t live in a file, but instead live on another server. That means in real-life that your code will look more like this:

(If you’re not using RStudio, you’ll need some other way to securely retrieve your password. You should never record it in your analysis scripts or type it into the console. Securing Credentials provides some best practices.)

Our temporary database has no data in it, so we’ll start by copying over nycflights13::flights using the convenient copy_to() function. This is a quick and dirty way of getting data into a database and is useful primarily for demos and other small jobs.

As you can see, the copy_to() operation has an additional argument that allows you to supply indexes for the table. Here we set up indexes that will allow us to quickly process the data by day, carrier, plane, and destination. Creating the right indices is key to good database performance, but is unfortunately beyond the scope of this article.

Now that we’ve copied the data, we can use tbl() to take a reference to it:

When you print it out, you’ll notice that it mostly looks like a regular tibble:

Dplyr Join Cheat Sheet Excel

The main difference is that you can see that it’s a remote source in a SQLite database.

Generating queries

To interact with a database you usually use SQL, the Structured Query Language. SQL is over 40 years old, and is used by pretty much every database in existence. The goal of dbplyr is to automatically generate SQL for you so that you’re not forced to use it. However, SQL is a very large language and dbplyr doesn’t do everything. It focusses on SELECT statements, the SQL you write most often as an analyst.

Most of the time you don’t need to know anything about SQL, and you can continue to use the dplyr verbs that you’re already familiar with:

However, in the long-run, I highly recommend you at least learn the basics of SQL. It’s a valuable skill for any data scientist, and it will help you debug problems if you run into problems with dplyr’s automatic translation. If you’re completely new to SQL you might start with this codeacademy tutorial. If you have some familiarity with SQL and you’d like to learn more, I found how indexes work in SQLite and 10 easy steps to a complete understanding of SQL to be particularly helpful. Mac for word templates.

Video recorder for mac. The most important difference between ordinary data frames and remote database queries is that your R code is translated into SQL and executed in the database on the remote server, not in R on your local machine. When working with databases, dplyr tries to be as lazy as possible:

It never pulls data into R unless you explicitly ask for it.

It delays doing any work until the last possible moment: it collects together everything you want to do and then sends it to the database in one step.

For example, take the following code:

Surprisingly, this sequence of operations never touches the database. It’s not until you ask for the data (e.g. by printing tailnum_delay) that dplyr generates the SQL and requests the results from the database. Even then it tries to do as little work as possible and only pulls down a few rows.

Behind the scenes, dplyr is translating your R code into SQL. You can see the SQL it’s generating with show_query():

If you’re familiar with SQL, this probably isn’t exactly what you’d write by hand, but it does the job. You can learn more about the SQL translation in vignette('translation-verb') and vignette('translation-function').

Typically, you’ll iterate a few times before you figure out what data you need from the database. Once you’ve figured it out, use collect() to pull all the data down into a local tibble:

collect() requires that database does some work, so it may take a long time to complete. Otherwise, dplyr tries to prevent you from accidentally performing expensive query operations:

Because there’s generally no way to determine how many rows a query will return unless you actually run it,

nrow()is alwaysNA.Because you can’t find the last few rows without executing the whole query, you can’t use

tail().

You can also ask the database how it plans to execute the query with explain(). The output is database dependent, and can be esoteric, but learning a bit about it can be very useful because it helps you understand if the database can execute the query efficiently, or if you need to create new indices.

Creating your own database

If you don’t already have a database, here’s some advice from my experiences setting up and running all of them. SQLite is by far the easiest to get started with. PostgreSQL is not too much harder to use and has a wide range of built-in functions. In my opinion, you shouldn’t bother with MySQL/MariaDB: it’s a pain to set up, the documentation is subpar, and it’s less featureful than Postgres. Google BigQuery might be a good fit if you have very large data, or if you’re willing to pay (a small amount of) money to someone who’ll look after your database.

All of these databases follow a client-server model - a computer that connects to the database and the computer that is running the database (the two may be one and the same but usually isn’t). Getting one of these databases up and running is beyond the scope of this article, but there are plenty of tutorials available on the web.

MySQL/MariaDB

In terms of functionality, MySQL lies somewhere between SQLite and PostgreSQL. It provides a wider range of built-in functions. It gained support for window functions in 2018.

PostgreSQL

PostgreSQL is a considerably more powerful database than SQLite. It has a much wider range of built-in functions, and is generally a more featureful database.

BigQuery

BigQuery is a hosted database server provided by Google. To connect, you need to provide your project, dataset and optionally a project for billing (if billing for project isn’t enabled).

It provides a similar set of functions to Postgres and is designed specifically for analytic workflows. Because it’s a hosted solution, there’s no setup involved, but if you have a lot of data, getting it to Google can be an ordeal (especially because upload support from R is not great currently). (If you have lots of data, you can ship hard drives!)

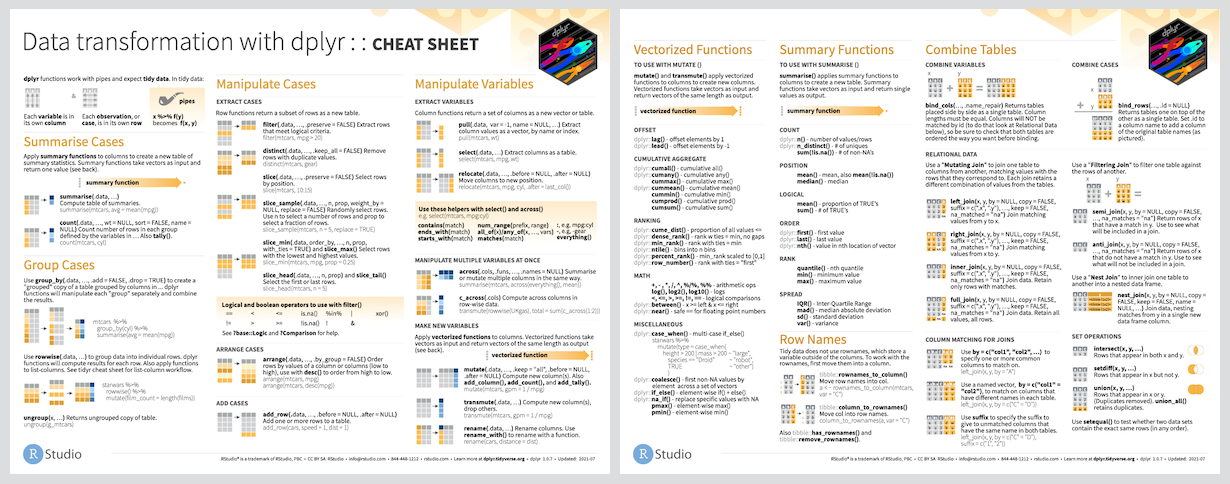

data.table and dplyr cheat-sheet

This is a cheat-sheet on data manipulation using data.table and dplyr package (sqldf will be included soon…) . The package dplyr is an excellent and intuitive tool for data manipulation in R. Due to its intuitive data process steps and a somewhat similar concepts with SQL, dplyr gets increasingly popular. Another reason is that it can be integrated in SparkR seamlessly. Mastering dplyr will be a must if you want to get started with SparkR.

I found this cheat-sheet very useful in using dplyr. My post is inspired by it. I hereby write this cheat sheet for data manipulation with data.table / data.frame and dplyr computation side by side. It is especially useful for those who wants to convert data manipulation style from data.table to dplyr. There are 6 data investigation and manipulation included:

- Summary of data

- subset rows

- subset columns

- summarize data

- group data

- create new data

Select rows that meet logical criteria:

dplyr

data.frame / data.table

Remove duplicate rows:

dplyr

data.table

Randomly select fraction of rows

dplyr

Randomly select n rows

dplyr

data.table / data.frame

Select rows by position

dplyr

data.table / data.frame

Select and order top n entries (by group if group data)

dplyr

data.table

dplyr

data.frame

> iris[c(‘Sepal.Width’,’Petal.Length’,’Species’)]

data.table

Select columns whose name contains a character string

Select columns whose name ends with a character string

Select every column

dplyr

data.frame

Select columns whose name matches a regular expression

Select columns names x1,x2,x3,x4,x5

select(iris, num_range(‘x’, 1:5))

Select columns whose names are in a group of names

Select column whose name starts with a character string

Select all columns between Sepal.Length and Petal.Width (inclusive)

Select all columns except Species.

dplyr

data.frame

The package dplyr allows you to easily compute first, last, nth, n, n_distinct, min, max, mean, median, var, st of a vector as a summary of the table.

Summarize data into single row of values

dplyr

Apply summary function to each column

Note: mean cannot be applied on Factor type.

Count number of rows with each unique value of variable (with or without weights)

dplyr

data.table:

aggregate {stats}

Group data into rows with the same value of Species

dplyr

data.table: this is usually performed with some aggregation computation

Remove grouping information from data frame

dplyr

Compute separate summary row for each group

dplyr

data.frame

data.table

Mutate used window function, function that take a vector of values and return another vector of values, such as:

compute and append one or more new columns

data.frame / data.table

dplyr

Apply window function to each column

dplyr

base

data.table

Compute one or more new columns. Drop original columns

Compute new variable by group.

dplyr

iris %>% group_by(Species) %>% mutate(ave = mean(Sepal.Length))

data.table

iris[, ave:=mean(Sepal.Length), by = Species]

data.frame

You can verify the result df1, df2 using: